Why MLOps Was the Decisive Factor

This case study focuses on deploying AI in a semiconductor manufacturing environment, where requirements around yield, reliability, traceability, and production sign-off are significantly higher than in typical software-driven AI use cases.

The goal was not experimental modeling, but supporting real production decisions such as process control, inspection analysis, and manufacturing readiness. In this environment, AI failures directly translate into cost, yield loss, or qualification risk.

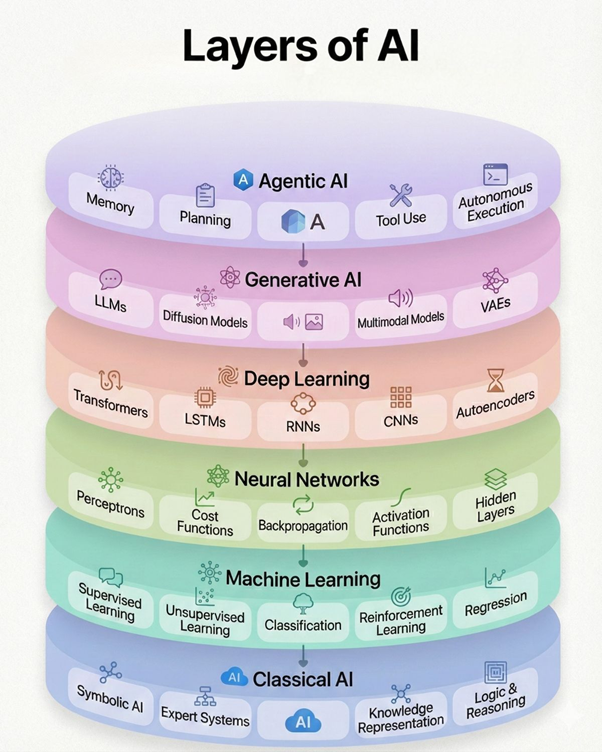

This view describes the evolution of AI capabilities, from classical AI to machine learning, deep learning, and modern generative and agentic systems. It explains what kinds of models exist, but not how they operate reliably in production.

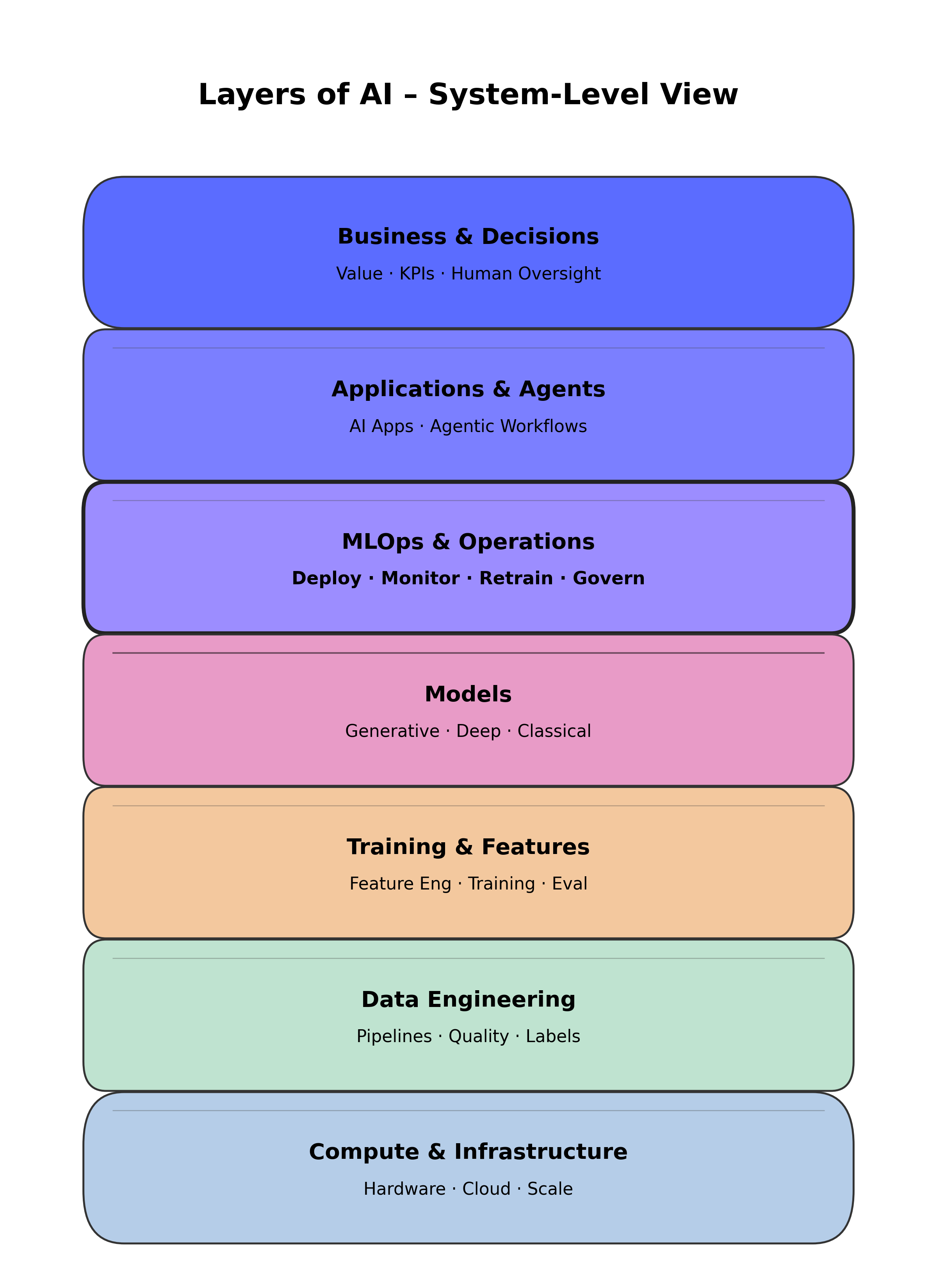

This system-level view represents AI as an engineered pipeline composed of infrastructure, data, training, models, operations, applications, and business decisions. It explains how AI delivers value in real environments.

In practice, most AI failures occur outside the model itself. Data changes, tool updates, process evolution, and deployment mistakes introduce silent risk.

MLOps enabled:

Without MLOps, AI remains a demo. With MLOps, AI becomes a production system.

The system ran on fab-compatible infrastructure, balancing compute availability, security, and latency. Predictable inference timing was critical to align with manufacturing takt time.
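One way to make "predictable inference timing" checkable is to compare a high percentile of measured latency against the time budget allotted to inference. The sketch below is illustrative only: the function name, the millisecond units, and the choice of the 99th percentile are assumptions, not details from the case study.

```python
import statistics

def p99_within_budget(latencies_ms, budget_ms):
    """Return True if the 99th-percentile latency fits inside the time budget.

    Both arguments are in milliseconds. The budget is assumed to be the slice
    of takt time reserved for inference (a hypothetical figure).
    """
    # quantiles(n=100) returns 99 cut points; the last one is the 99th percentile.
    p99 = statistics.quantiles(latencies_ms, n=100)[-1]
    return p99 <= budget_ms
```

Checking a tail percentile rather than the mean reflects the point made above: a single slow inference can miss the production window even when the average looks fine.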

Manufacturing data came from inspection tools, metrology systems, and process logs. Pipelines handled schema evolution, tool changes, and strict data quality requirements.
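A minimal sketch of how a pipeline might surface schema changes instead of absorbing them silently. The field names and types in `EXPECTED_SCHEMA` are hypothetical; the case study does not describe the actual schema or validation tooling.

```python
# Hypothetical schema for one inspection record: field name -> expected type.
EXPECTED_SCHEMA = {
    "wafer_id": str,
    "tool_id": str,
    "defect_count": int,
    "cd_nm": float,
}

def validate_record(record, schema=EXPECTED_SCHEMA):
    """Return a list of problems found in the record; an empty list means it passes."""
    problems = []
    for name, expected_type in schema.items():
        if name not in record:
            problems.append(f"missing field: {name}")
        elif not isinstance(record[name], expected_type):
            actual = type(record[name]).__name__
            problems.append(f"{name}: expected {expected_type.__name__}, got {actual}")
    # Unknown fields are reported rather than dropped, so a tool update that
    # adds or renames a column is noticed by a person.
    for name in record:
        if name not in schema:
            problems.append(f"unexpected field: {name}")
    return problems
```

For example, a record missing `tool_id` and carrying a new `recipe_rev` column would be reported with both problems, and the pipeline could quarantine it rather than pass it on to training or inference.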

Feature engineering focused on process-relevant representations. Training workflows emphasized repeatability, traceability, and stability over marginal accuracy gains.

Models were chosen for robustness and explainability. They were deliberately treated as replaceable components, not the center of the system.

The operations layer (MLOps) was what made AI acceptable in production. Monitoring, drift detection, controlled retraining, and versioning kept models valid as processes evolved.

AI outputs supported engineers with clear decision boundaries. Automation was constrained, and accountability was preserved through human oversight.
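The idea of clear decision boundaries with constrained automation can be sketched as a simple routing rule: the model only makes a recommendation when it is confident, and everything in between goes to an engineer. The thresholds and label names below are hypothetical placeholders, not values from the case study.

```python
def route_decision(defect_probability, pass_below=0.05, hold_above=0.95):
    """Map a model score to a recommendation or to human review.

    pass_below and hold_above are illustrative thresholds; in practice they
    would be set and signed off by process engineering.
    """
    if not 0.0 <= defect_probability <= 1.0:
        raise ValueError("defect_probability must be between 0 and 1")
    if defect_probability < pass_below:
        return "recommend_pass"
    if defect_probability > hold_above:
        return "recommend_hold"
    # The uncertain middle band is never decided automatically.
    return "engineer_review"
```

Even the confident outcomes are phrased as recommendations here, which keeps the final disposition with a named person.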

Success was measured by adoption, trust, and sustained reliability, not by offline model metrics alone.

Gradual tool and process changes caused distribution drift. Monitoring detected issues early, enabling retraining before yield impact occurred.
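The case study does not say which drift metric was used. As one common option, the sketch below computes the Population Stability Index (PSI) for a single numeric feature, comparing a current sample against a reference sample. The bin count and the alert threshold mentioned afterwards are assumptions.

```python
import math

def psi(reference, current, bins=10):
    """Population Stability Index between two samples of one numeric feature."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the reference is constant

    def fractions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the reference range are clamped into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    ref_frac = fractions(reference)
    cur_frac = fractions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_frac, cur_frac))
```

A widely used rule of thumb treats a PSI above roughly 0.2 as a meaningful shift. A monitoring job could compute this per feature on a schedule and open a retraining review when the threshold is crossed.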

Tool metadata was versioned alongside data and models. Compatibility checks and rollback mechanisms ensured safe operation after maintenance events.
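A compatibility check with rollback can be sketched as choosing the newest model release that was validated against the tool's current configuration. The `ModelRelease` fields and the configuration identifiers below are hypothetical; the actual registry design is not described in the source.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ModelRelease:
    version: str
    tool_id: str
    # Tool configuration revisions this release was validated against.
    compatible_tool_configs: frozenset

def select_release(releases, tool_id, tool_config):
    """Return the newest release validated for this tool configuration, or None.

    `releases` is assumed to be ordered from oldest to newest.
    """
    for release in reversed(releases):
        if release.tool_id == tool_id and tool_config in release.compatible_tool_configs:
            return release
    # No validated model exists: the caller should fall back to manual review.
    return None
```

If maintenance returns a tool to an earlier configuration, the same lookup rolls back to the last release validated for it, without anyone having to remember which model belongs to which state.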

During node transitions, phased retraining and explicit model-to-node mapping enabled safe adaptation with engineering oversight.
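An explicit model-to-node mapping with phased rollout might look like the sketch below. The node names, model versions, and the three phases are illustrative assumptions; the source states only that the mapping was explicit and the retraining phased.

```python
from enum import Enum

class Phase(Enum):
    SHADOW = "shadow"          # predictions are logged but not shown to engineers
    ADVISORY = "advisory"      # predictions are shown as recommendations
    PRODUCTION = "production"  # predictions feed the approved workflow

# Hypothetical mapping: process node -> (model version, rollout phase).
NODE_MODELS = {
    "node-A": ("defect-model-3.2", Phase.PRODUCTION),
    "node-B": ("defect-model-4.0", Phase.SHADOW),
}

def resolve_model(node, mapping=NODE_MODELS):
    """Look up the model for a node; there is deliberately no default model."""
    if node not in mapping:
        raise LookupError(f"no model approved for {node}")
    return mapping[node]

def promote(node, new_phase, approved_by, mapping=NODE_MODELS):
    """Move a node's model to a new phase, requiring a named approver."""
    if not approved_by:
        raise ValueError("promotion requires a named approver")
    version, _ = resolve_model(node, mapping)
    mapping[node] = (version, new_phase)
```

Refusing to serve an unmapped node is the design point: a model trained on one node is never applied to another by accident, and each phase change leaves a record of who approved it.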

“Building AI models is not the hard part in manufacturing. Making them trustworthy, traceable, and controllable is. That transition happens in MLOps.”